The rapid ascent of Artificial Intelligence (AI) has triggered a fundamental crisis in computing infrastructure: the “power wall.” As Large Language Models (LLMs) grow in complexity, the hardware required to train and run them consumes an unprecedented amount of electricity. Traditional silicon-based processors, which rely on the movement of electrons through copper traces, are hitting physical limits in heat dissipation and signal latency. To sustain the next decade of AI advancement, the industry is turning to a different signal carrier, replacing electrons with photons. Photonic chips are no longer a laboratory curiosity; they are becoming an essential architecture for scaling the intelligence of tomorrow.

The Bottleneck of Electronic Interconnects

In a typical AI cluster, thousands of GPUs must communicate simultaneously to process massive datasets. This internal data movement is where the system often chokes. When electrons move through copper wires, they encounter resistance, which generates heat and degrades the signal. As data rates push beyond 200 Gbps per lane, the power required just to move data between chips can exceed the power used for the actual computation.
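To give a sense of scale, link power can be estimated as data rate multiplied by energy per bit. The sketch below is illustrative only: the function name, the lane count, the cluster size, and the picojoule-per-bit figures are all assumptions chosen for the arithmetic, not measurements of any specific product.

```python
def link_power_watts(gbps: float, pj_per_bit: float) -> float:
    """Power drawn by one link: (bits per second) x (joules per bit)."""
    bits_per_second = gbps * 1e9
    joules_per_bit = pj_per_bit * 1e-12
    return bits_per_second * joules_per_bit


# Hypothetical figures for illustration only.
LANE_GBPS = 200      # per-lane data rate mentioned in the text
LANES_PER_GPU = 8    # assumed lane count per accelerator
GPUS = 1000          # assumed cluster size

for label, pj in [("assumed electrical link", 5.0), ("assumed optical link", 1.0)]:
    per_lane = link_power_watts(LANE_GBPS, pj)
    total_kw = per_lane * LANES_PER_GPU * GPUS / 1000
    print(f"{label}: {per_lane:.2f} W per lane, {total_kw:.1f} kW for the cluster")
```

Because the relationship is linear, any reduction in energy per bit translates directly into the same proportional reduction in interconnect power at a fixed data rate.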

This “interconnect bottleneck” leads to three primary issues for AI scaling:

- Thermal Throttling: The heat generated by high-speed electrical signals requires massive cooling systems, increasing the Total Cost of Ownership (TCO) for data centers.

- Latency: Electronic signals are subject to RC (resistance-capacitance) delays, which slow down the synchronization required for parallel AI processing.

- Bandwidth Density: Copper cables are bulky and physically limit how many connections can be packed into a server rack, hindering the density needed for hyper-scale AI clusters.
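The RC delay named in the Latency point can be made concrete with the textbook Elmore estimate for a uniform wire. The symbols are introduced here for illustration: $r$ and $c$ are resistance and capacitance per unit length, and $L$ is the wire length.

$$\tau \approx \tfrac{1}{2}\,(rL)(cL) = \tfrac{1}{2}\, r\, c\, L^{2}$$

Since both total resistance and total capacitance grow with length, the delay of an unrepeated, RC-dominated wire grows with the square of its length. This is a simplified model; long board-level and cable links at high frequencies behave as lossy transmission lines, where skin-effect and dielectric losses also matter.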

How Photonic Integrated Circuits Solve the Scaling Problem

Photonic chips, or Photonic Integrated Circuits (PICs), address these challenges by manipulating light instead of electricity. Because photons carry no charge, they do not dissipate energy through electrical resistance, and they propagate through waveguides and optical fiber at the speed of light in the medium with low loss. This allows for “Optical I/O,” where data is converted into light directly at the processor level and transmitted across the data center via optical fiber.

The efficiency of a photonic system is largely determined by its ability to modulate light, that is, to “shutter” a laser beam billions of times per second to represent binary data. This is where material science meets computer architecture. While silicon photonics has been the standard, the industry is now moving toward high-performance materials like Thin-Film Lithium Niobate (TFLN) to achieve the ultra-high frequencies required for 1.6T and 3.2T networking.

Why TFLN Chips Are the New Standard for AI

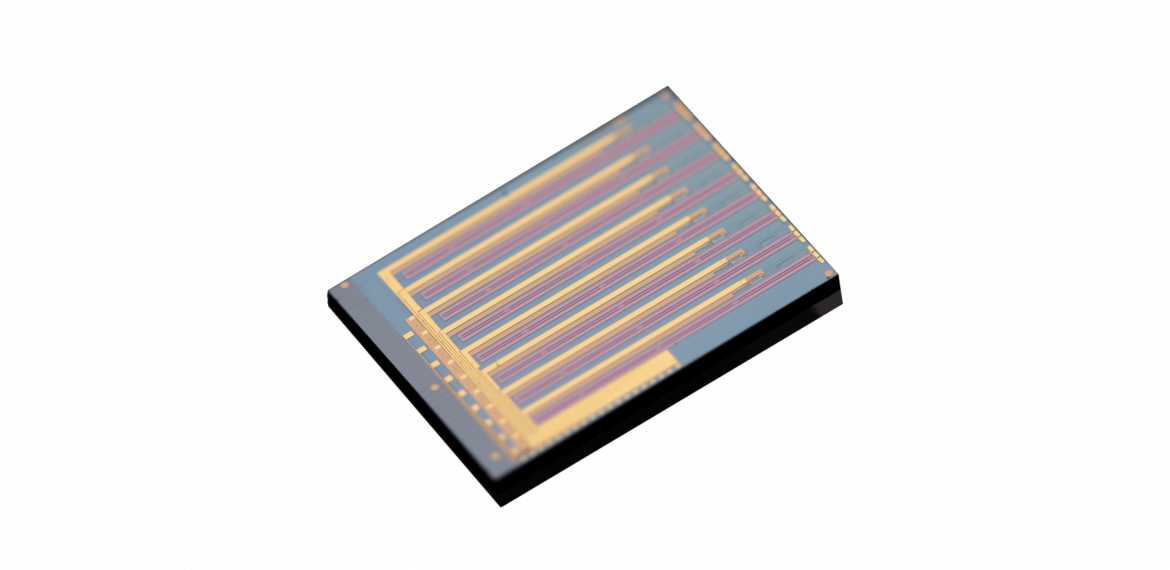

Among the various photonic platforms, Thin-Film Lithium Niobate (TFLN) chips have emerged as the superior choice for high-speed AI interconnects. Lithium Niobate has long been prized for its “Pockels effect,” the linear electro-optic effect in which an applied electric field changes the material's refractive index, allowing light to be modulated with extreme precision and speed. By “thinning” this material into a sub-micron film on a silicon wafer, engineers have unlocked a new class of high-performance devices.
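For readers who want the underlying relation, the Pockels effect is usually written as a refractive-index change that is linear in the applied field. The symbols below are standard textbook notation rather than anything specific to this article: $n$ is the unperturbed refractive index, $r$ is the relevant electro-optic coefficient, and $E$ is the applied electric field.

$$\Delta n \approx -\tfrac{1}{2}\, n^{3}\, r\, E$$

Over an interaction length $L$ at wavelength $\lambda$, this index change produces a phase shift

$$\Delta\phi = \frac{2\pi}{\lambda}\,\Delta n\, L$$

The half-wave voltage $V_\pi$ is the drive voltage at which the phase difference in the modulator reaches $\pi$, so a stronger field per volt or a longer electrode lowers $V_\pi$.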

For AI hardware, the advantages of TFLN are transformative:

- Ultra-High Bandwidth: TFLN modulators can exceed 70 GHz of bandwidth, supporting the data rates required for the next generation of AI accelerators.

- Low Driving Voltage: These chips require significantly less power to modulate light compared to traditional materials, directly reducing the energy footprint of the AI cluster.

- Superior Linearity: For coherent optical communications, TFLN provides the high signal integrity needed to maintain complex data encoding over long distances with low error rates.
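The “Low Driving Voltage” point can be illustrated with the idealized transfer function of a Mach-Zehnder modulator, a structure commonly used for electro-optic intensity modulation. This is a minimal sketch assuming a lossless, perfectly balanced device biased at peak transmission; the function names and the example voltages are illustrative and not taken from any datasheet.

```python
import math


def mzm_transmission(v: float, v_pi: float) -> float:
    """Ideal Mach-Zehnder intensity transmission for drive voltage v.

    Assumes a lossless, balanced modulator: full transmission at 0 V,
    full extinction at v = v_pi.
    """
    return 0.5 * (1.0 + math.cos(math.pi * v / v_pi))


def relative_drive_power(v_pi_a: float, v_pi_b: float) -> float:
    """Ratio of electrical drive power (a over b) for a full on/off swing.

    Assumes the driver delivers a swing equal to v_pi into the same load,
    so power scales with voltage squared.
    """
    return (v_pi_a / v_pi_b) ** 2


if __name__ == "__main__":
    for v_pi in (2.0, 4.0):  # hypothetical half-wave voltages in volts
        points = [mzm_transmission(v, v_pi) for v in (0.0, v_pi / 2, v_pi)]
        print(f"V_pi = {v_pi} V -> transmission at 0, V_pi/2, V_pi: {points}")
    print("Drive power ratio (2 V vs 4 V):", relative_drive_power(2.0, 4.0))
```

Under these assumptions, halving the half-wave voltage cuts the drive power needed for a full swing to one quarter, which is why a lower drive voltage matters for the energy budget of a link.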

Liobate: Advanced TFLN Solutions for AI Infrastructure

As the demand for specialized AI hardware intensifies, Liobate has positioned itself as a critical partner for B2B enterprises and system integrators. They specialize in the design, fabrication, and packaging of high-performance PICs based on the Thin-Film Lithium Niobate platform. By operating as an Integrated Device Manufacturer (IDM), they provide the scalability and technical precision required for the most demanding photonic applications.

The product lineup offered by Liobate is specifically engineered to solve the scaling challenges of modern AI data centers. Their technology focuses on eliminating historical hurdles such as bias drift and high insertion loss, ensuring that their chips perform reliably in the high-temperature, 24/7 operating environments of AI training clusters.

Precision Specifications for IDM Partners

Liobate provides a range of TFLN chips with specifications tailored for high-speed optical transceivers and co-packaged optics (CPO):

| Product Category | 3 dB Bandwidth | Half-Wave Voltage (Vπ) | Application Focus |
| --- | --- | --- | --- |
| 3.2T DR8 Chip | 110 GHz | < 1.5 V (Differential) | Next-gen Hyper-scale Interconnects |
| 1.6T DR8 Chip | 70 GHz | < 2.0 V (Differential) | 800G/1.6T Optical Modules |
| Coherent PDMIQ | 70 GHz | < 4.5 V (Differential) | Long-haul AI Data Center Interconnects |
| Intensity Modulator | 110 GHz | < 3.0 V | High-precision Test Instruments |
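As a rough sanity check on the bandwidth figures in the table, the sketch below estimates the per-lane symbol rate and Nyquist frequency for the two DR8 module classes. It assumes that “DR8” means eight parallel optical lanes and that each lane uses PAM4 signaling (2 bits per symbol), and it ignores FEC and encoding overhead, so real symbol rates would be somewhat higher. The class and function names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class LaneEstimate:
    lane_gbps: float    # data rate carried by one lane
    gbaud: float        # symbol rate per lane
    nyquist_ghz: float  # half the symbol rate


def estimate_lane(total_gbps: float, lanes: int = 8, bits_per_symbol: int = 2) -> LaneEstimate:
    """Split an aggregate rate across lanes (assumed PAM4, no FEC overhead)."""
    lane_gbps = total_gbps / lanes
    gbaud = lane_gbps / bits_per_symbol
    return LaneEstimate(lane_gbps, gbaud, gbaud / 2)


# Modulator bandwidths are the 3 dB figures quoted in the table.
for name, total_gbps, modulator_ghz in [("1.6T DR8", 1600, 70), ("3.2T DR8", 3200, 110)]:
    est = estimate_lane(total_gbps)
    print(
        f"{name}: {est.lane_gbps:.0f} Gbps/lane, {est.gbaud:.0f} GBd, "
        f"Nyquist {est.nyquist_ghz:.0f} GHz vs modulator {modulator_ghz} GHz"
    )
```

Under these assumptions the quoted 70 GHz and 110 GHz bandwidths sit above the estimated Nyquist frequencies for 200 Gbps and 400 Gbps lanes respectively, which is consistent with the application focus listed for each chip. Treat this as a back-of-the-envelope check, not a link budget.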

Beyond standalone chips, they offer comprehensive IDM services. Their capabilities include proprietary high-performance packaging that maintains low fiber-chip coupling loss, a critical factor for the energy efficiency of the entire optical link. For B2B clients in the telecommunications and data interconnect sectors, Liobate provides not just the raw components but also a mature fabrication platform that supports high-density photonic integration.

Whether the target is 800G DR4 transceivers or custom-designed PICs for autonomous-driving LiDAR systems, their expertise in TFLN is aimed at letting AI hardware continue to scale without being held back by the physical limitations of electrical signaling. As we move toward the 1.6T era, the collaboration between silicon intelligence and TFLN photonics will be a key pathway for the future of computing.